SAL (Sigasi AI Layer, in case you’re wondering) is the name of the integrated AI chatbot in Sigasi Visual HDL.

There are three ways to get a conversation with SAL started.

First, by clicking the SAL icon in the Activity Bar.

Second, by choosing “Chat with SAL: Focus on Chat with SAL View” from the Command Palette (opened with Ctrl-Shift-P by default).

Finally, by selecting a piece of HDL code and using the context menu SAL > Explain This Code.

Note: Through SAL, you can connect to a remote model using the OpenAI API, such as OpenAI’s GPT-4 model, or to a local AI model of your choice via LM Studio.

Configuring your AI model

SAL is configured using up to four environment variables. Be sure to set them before starting Sigasi Visual HDL, so they get picked up correctly. The easiest way to get started is by connecting to the OpenAI servers, as detailed below. If you prefer to use a model made by another company, or you’re working on an air-gapped machine, you’ll have to set up a local model.
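A program started from a shell only inherits variables that have been exported, so a variable assigned without export will not reach Sigasi Visual HDL when it is launched from that shell. The following is a minimal sketch of a check, assuming a POSIX shell and the standard printenv utility; sal_var_visible is a hypothetical helper name and not part of Sigasi.

```shell
# Report whether a variable would be inherited by a program started from
# this shell. printenv runs as a separate process, so it only sees
# exported variables. (sal_var_visible is a hypothetical helper name.)
sal_var_visible() {
  if printenv "$1" >/dev/null 2>&1; then
    echo "$1: visible"
  else
    echo "$1: not visible"
  fi
}
```

Running sal_var_visible SIGASI_AI_API_KEY in the shell from which you intend to start the tool shows whether the key was exported there. The helper never prints the value itself.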

Connecting to remote OpenAI services

If you have a working internet connection and an OpenAI API key, configuring the backend for SAL is as easy as setting the environment variable SIGASI_AI_API_KEY to your API key.

By default, this will use the GPT 3.5 Turbo model.

export SIGASI_AI_API_KEY="your-openai-api-key"

You can use a different model by setting SIGASI_AI_MODEL to, for example, gpt-4-turbo.

export SIGASI_AI_MODEL="gpt-4-turbo" # with the GPT 4 model

export SIGASI_AI_API_KEY="your-openai-api-key" # with this API key
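To review the OpenAI-related settings before starting the tool, a small summary function can help. This is a sketch only: sal_openai_summary is a hypothetical name, and the word "default" simply indicates that SIGASI_AI_MODEL is unset, in which case SAL chooses the model as described above.

```shell
# Summarize the OpenAI-related SAL settings found in the current shell.
# (sal_openai_summary is a hypothetical helper, not part of Sigasi.)
sal_openai_summary() {
  if [ -z "${SIGASI_AI_API_KEY:-}" ]; then
    echo "SIGASI_AI_API_KEY is not set"
    return 1
  fi
  # Only report that the key is present; never print the key itself.
  echo "API key: set, model: ${SIGASI_AI_MODEL:-default}"
}
```

The function returns a non-zero status when the key is missing, so it can guard a launch script, for example with sal_openai_summary || exit 1.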

Connecting to a local model

This guide will help you use LM Studio to host a local Large Language Model (LLM) to work with SAL. Currently, SAL supports the OpenAI integration API, and any server that exposes this API can interface with SAL. You need to set the correct URL endpoint and model name, and optionally provide the API key if the endpoint requires one.

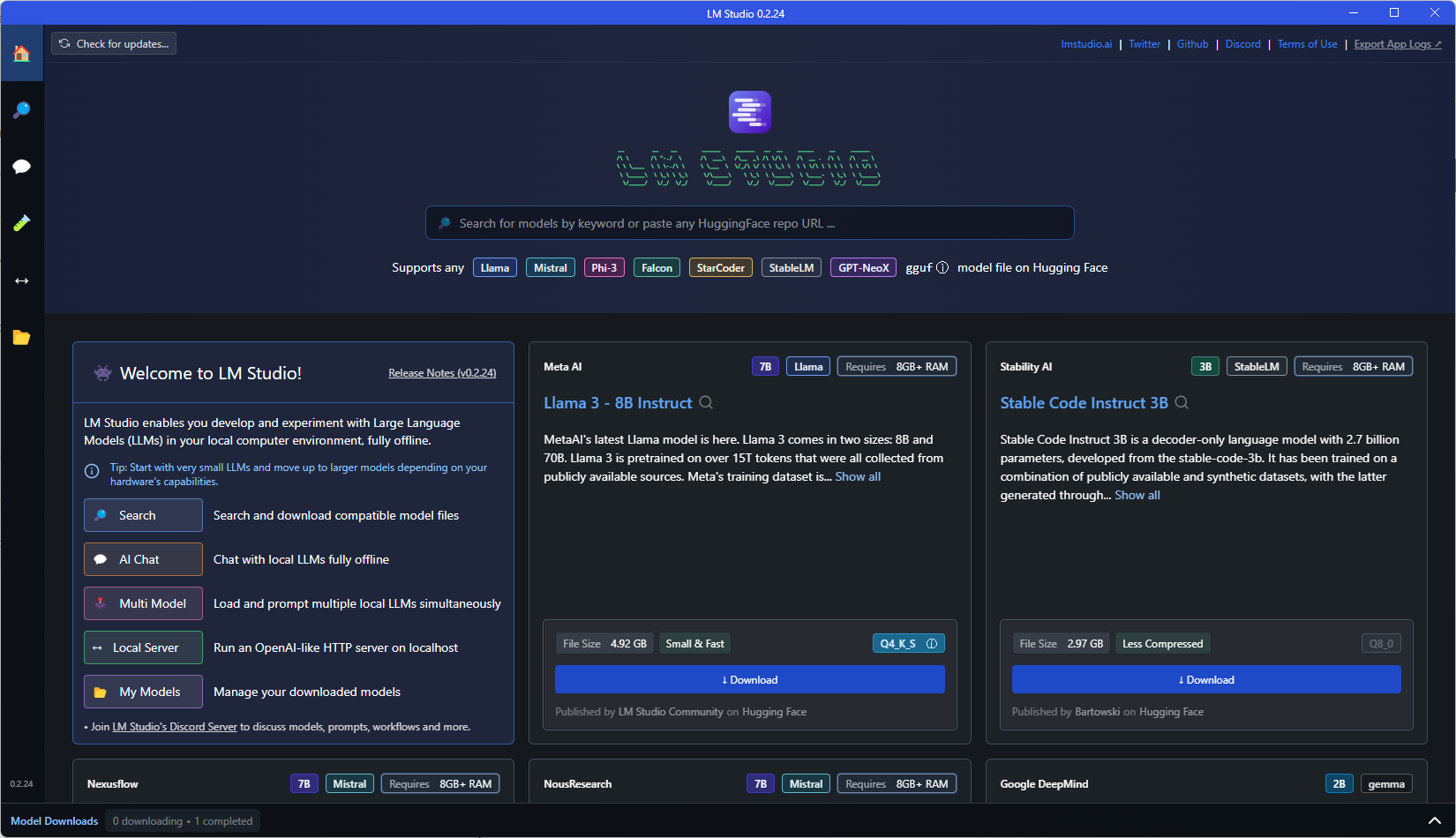

Download the latest version of LM Studio.

Open LM Studio. From the home screen, search for and download the model you want to use.

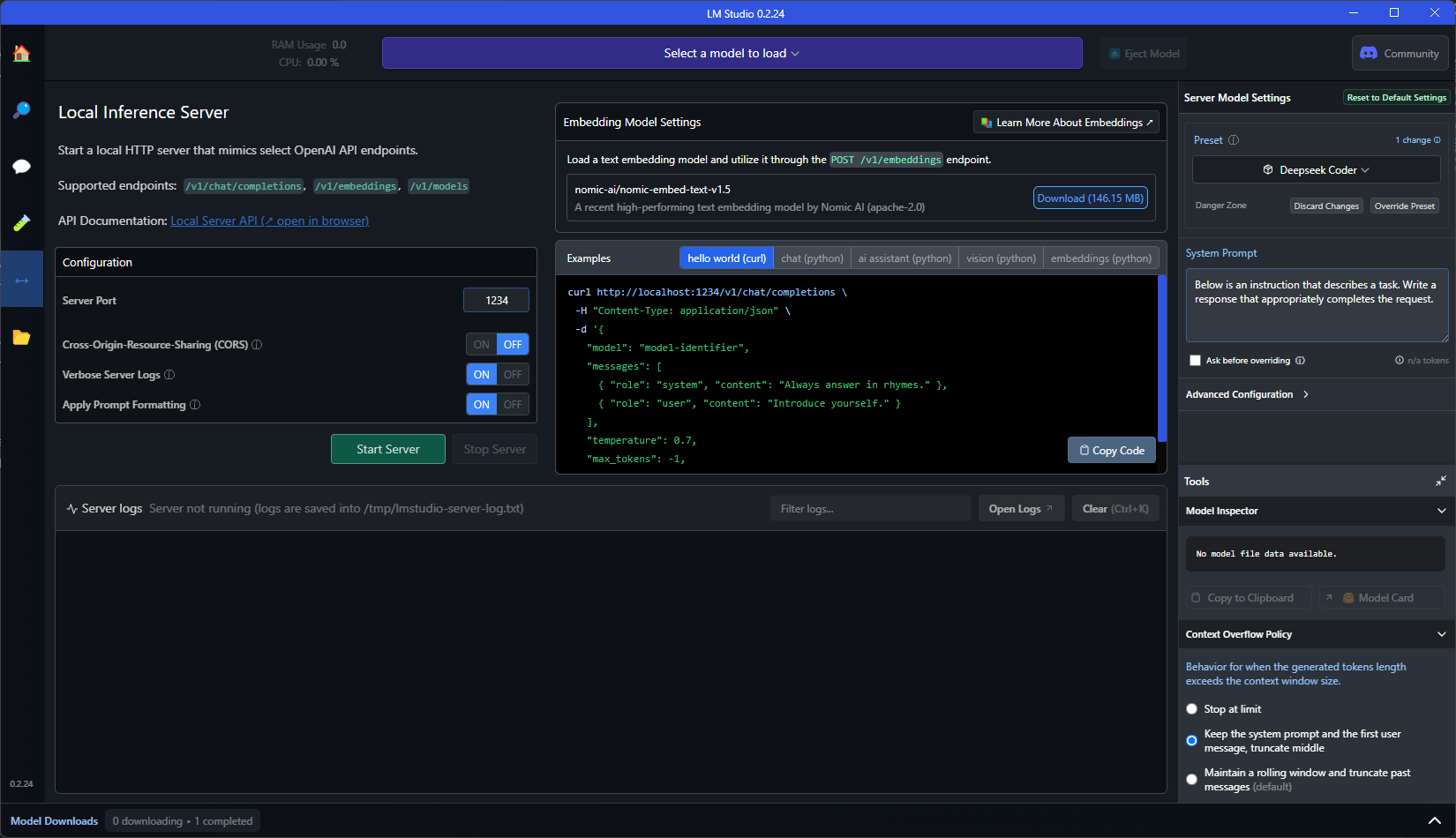

Navigate to the Local Server Window.

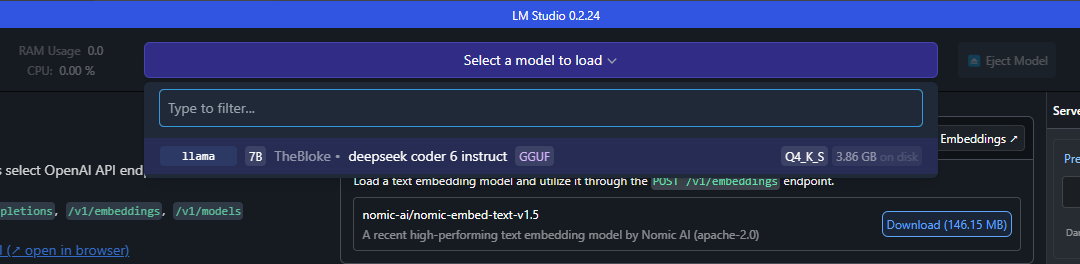

At the top of the local server screen, use the selection menu to choose the model you wish to load.

(Optional) Configure local server parameters, such as the Context overflow policy, the server port, and Cross-Origin Resource Sharing (CORS).

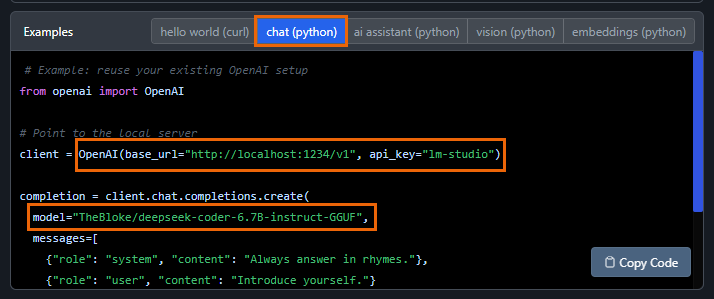

Set the environment variables for Sigasi Visual HDL. The required values are easiest to find in the Example window under chat (python):

SIGASI_AI_API_URL to the base URL

SIGASI_AI_MODEL to the model name

SIGASI_AI_API_KEY to the API key (if required)

In this example:

export SIGASI_AI_MODEL="TheBloke/deepseek-coder-6.7B-instruct-GGUF"

export SIGASI_AI_API_URL="http://localhost:1234/v1"

export SIGASI_AI_API_KEY="lm-studio"

Launch Sigasi Visual HDL and start a conversation using your configured local LLM.
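To confirm that the local server is reachable at the configured base URL, you can query it directly from the shell. The sketch below assumes that the server implements the model-listing endpoint of the OpenAI API (GET on the base URL followed by /models) and that curl is installed. The function names are hypothetical, and the fallback URL is the one from the example above.

```shell
# Build the URL of the model-listing endpoint from the configured base URL.
# Falls back to the example URL used in this guide when the variable is unset.
sal_models_url() {
  _sal_base="${SIGASI_AI_API_URL:-http://localhost:1234/v1}"
  # Drop one trailing slash so the result never contains "//models".
  printf '%s/models\n' "${_sal_base%/}"
}

# Ask the server which models it serves (assumes an OpenAI-compatible
# endpoint). Exits non-zero if the server is unreachable or returns an
# HTTP error.
sal_list_models() {
  curl --silent --show-error --fail \
    --header "Authorization: Bearer ${SIGASI_AI_API_KEY:-}" \
    "$(sal_models_url)"
}
```

With the local server running, sal_list_models should print a JSON document describing the loaded model. A connection error usually points to a wrong port or a server that has not been started.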